Codex 5.4 vs Claude Opus 4.6

Finally, I got my hands on the long-promised "best coding model" — Codex 5.4.

Naturally, curiosity kicked in.

Instead of asking it random coding questions, I decided to run a small experiment. I asked two frontier coding models — Codex 5.4 and Claude Opus 4.6 — to build the exact same product.

As an extra data point, I also added Codex 5.2 to the test.

The setup was simple: same task, same prompt, same constraints.

No follow-ups.

Just pure first-attempt execution.

The Task

Build a Product Feedback Board web app with:

- voting

- comments

- search

- filters

- drag-and-drop Kanban columns

Everything had to live inside a single React + TypeScript app.

No external backend.

Just a compact but realistic SaaS-style product.
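
To make the search and filter requirements concrete, here is a minimal sketch of how such a view could be derived from state. It is not taken from any model's output: the `FeedbackItem` and `Filters` shapes, the status values, and the `selectVisible` name are all assumptions for illustration.

```typescript
// Hypothetical shapes for illustration; field names and status values are assumptions.
type Status = "planned" | "in-progress" | "done";

interface FeedbackItem {
  id: string;
  title: string;
  description: string;
  status: Status;
  votes: number;
}

interface Filters {
  query: string;          // free-text search, matched case-insensitively
  status: Status | "all"; // "all" disables the status filter
}

// Returns the items that match the filters, most-voted first.
// filter() creates a new array, so the caller's array is never reordered.
function selectVisible(items: FeedbackItem[], filters: Filters): FeedbackItem[] {
  const needle = filters.query.trim().toLowerCase();
  return items
    .filter((item) => filters.status === "all" || item.status === filters.status)
    .filter(
      (item) =>
        needle === "" ||
        item.title.toLowerCase().includes(needle) ||
        item.description.toLowerCase().includes(needle),
    )
    .sort((a, b) => b.votes - a.votes);
}
```

Keeping this as a pure function means the board can recompute the visible cards on every keystroke without touching the stored data.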

Timing

Here's how long each model worked before producing the result (minutes:seconds):

- Claude Opus 4.6 — 5:56

- Codex 5.4 — 6:15

- Codex 5.2 — 5:25

The timing difference was small: only 50 seconds separated the fastest run (Codex 5.2) from the slowest (Codex 5.4).

What mattered more was how they behaved while building.

Planning

All three models came up with a decent plan.

None of them actually validated that plan with me before implementation.

That's interesting in itself: coding models tend to optimize for momentum, not alignment.

Opus plan:

- Types — Post, Comment, Category, Status, SortOption + constant arrays

- Seed data — 12 items, all categories/statuses covered

- State — useReducer + Context, localStorage sync in useEffect

- FilterBar, Board, Column, Card, PostModal, PostForm (shared for create/edit)

- Drag & drop — native HTML DnD, card sets ID on drag, column dispatches MOVE_POST on drop

- Styling — single CSS file, CSS variables, 3 responsive breakpoints

- Accessibility — dialog, aria-* attrs, focus-visible, keyboard handlers

- README — setup + architecture rationale
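
To illustrate the state and drag-and-drop items in that plan, here is a minimal sketch of a reducer that handles a `MOVE_POST` action. Only the names `Post`, `Status`, and `MOVE_POST` come from the plan; the field names, the status values, the `UPVOTE` action, and `boardReducer` are assumptions, and this is not the code Opus actually generated.

```typescript
// Assumed status values; the plan names the Status type but not its members.
type Status = "planned" | "in-progress" | "done";

// Assumed fields; the plan names the Post type but not its shape.
interface Post {
  id: string;
  title: string;
  status: Status;
  votes: number;
}

// MOVE_POST is named in the plan; UPVOTE is a hypothetical second action.
type Action =
  | { type: "MOVE_POST"; id: string; status: Status }
  | { type: "UPVOTE"; id: string };

// Pure reducer: returns a new array and leaves the previous state untouched,
// which is the contract React's useReducer expects.
function boardReducer(state: Post[], action: Action): Post[] {
  switch (action.type) {
    case "MOVE_POST":
      return state.map((post) =>
        post.id === action.id ? { ...post, status: action.status } : post,
      );
    case "UPVOTE":
      return state.map((post) =>
        post.id === action.id ? { ...post, votes: post.votes + 1 } : post,
      );
    default:
      return state;
  }
}
```

In a setup like the one the plan describes, the column's drop handler would dispatch `{ type: "MOVE_POST", id, status }` with the ID the card stored on drag start, and a `useEffect` would write the resulting state to localStorage.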

Codex 5.4 plan:

- Define app structure, domain types, seeded sample data, and state/persistence approach

- Implement the React app, responsive UI, filtering/sorting/search, CRUD, comments, upvotes, and drag-and-drop

- Add README and verify the codebase for completeness and consistency

Opus felt more explicit and architectural.

Codex 5.4 felt more compact and execution-oriented.

Human-in-the-Loop (HITL)

This is where the behavior difference became much more visible.

Claude Opus 4.6

During implementation, Opus asked for 10+ confirmations before running commands, changing files, or making structural decisions. It felt a bit excessive at times. But it also made the process feel controlled.

Codex 5.4

Codex 5.4 asked once and then kept going.

Codex 5.2

Codex 5.2 asked nothing.

So the spectrum was pretty clear:

- Opus = cautious

- Codex 5.4 = mostly autonomous

- Codex 5.2 = fully autonomous

Personally, I found Opus slightly too careful, but still preferable.

The Codex models felt a bit too independent and too willing to decide on their own.

Running the Project

Then I asked each model to run the project locally.

- Opus 4.6 — no problem, ran instantly

- Codex 5.4 — same, no issues

- Codex 5.2 — failed to run its own project the first two times

That was the first obvious reliability gap.

Final Result

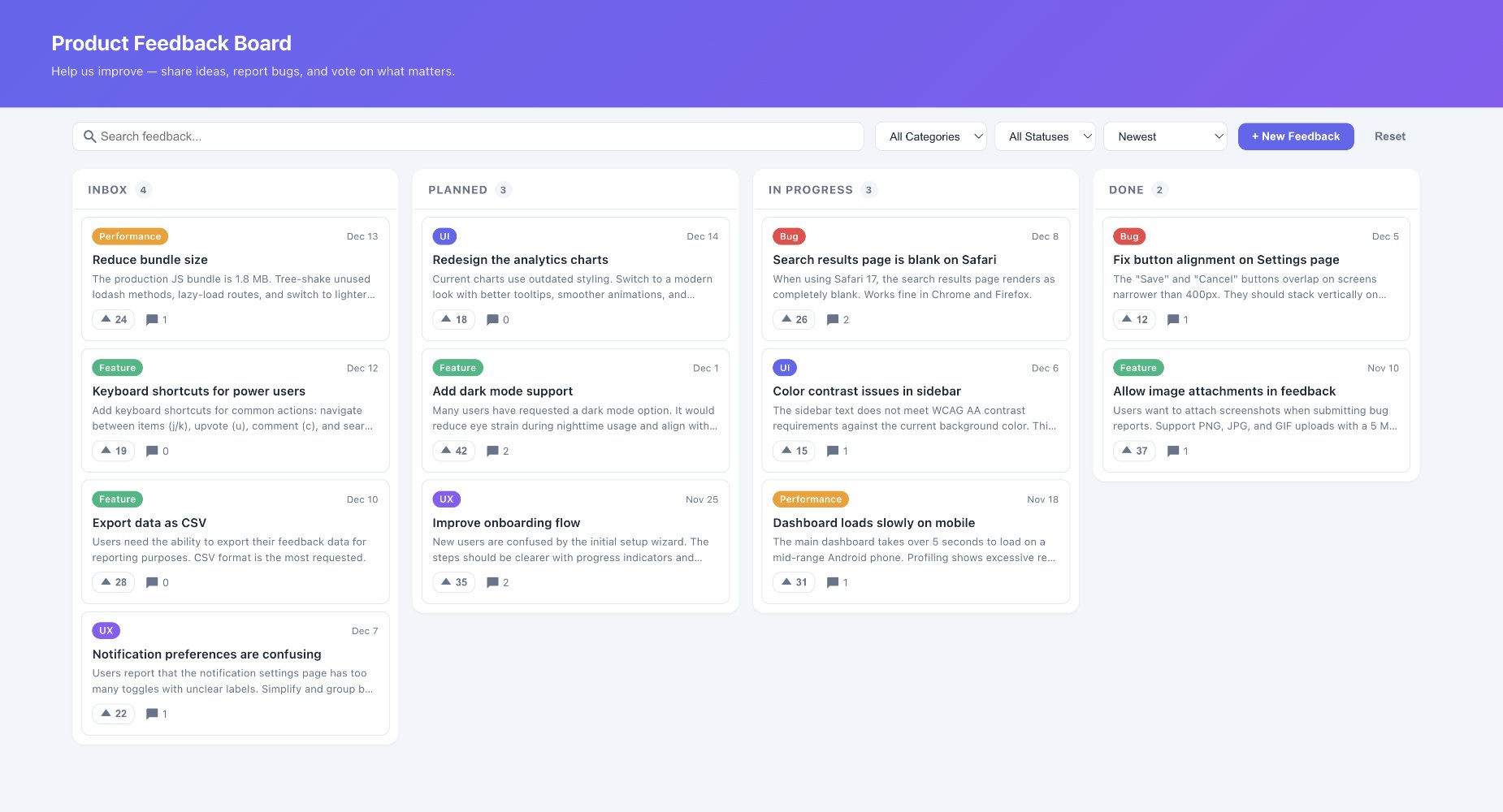

Claude Opus 4.6

The UI had slightly smaller fonts, but overall it looked clean, structured, and usable.

Claude Opus 4.6 — clean and structured UI

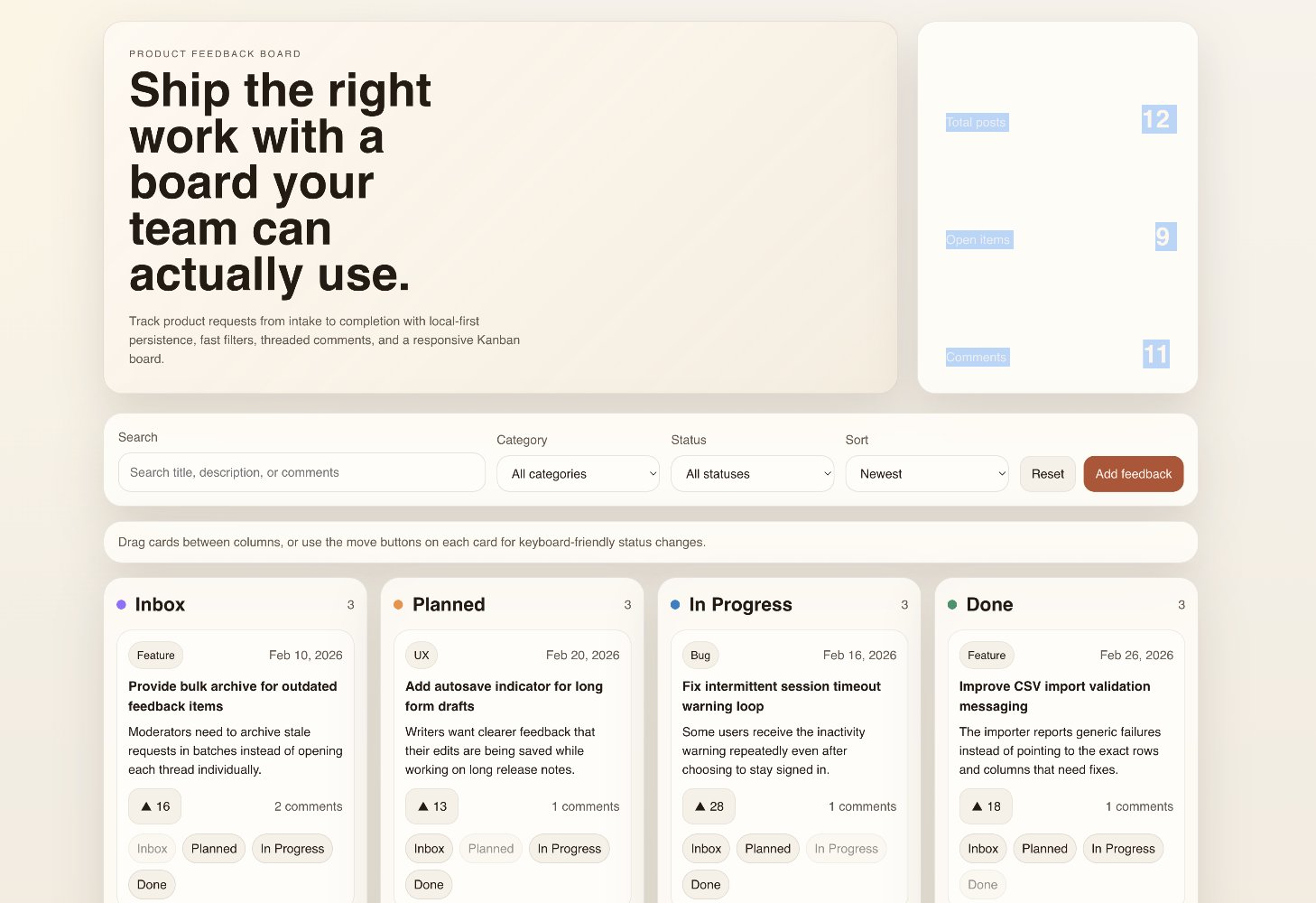

Codex 5.4

The UI felt bigger and a bit oversized. One block in the upper-right corner was completely white, apparently white text on a white background. Oops.

Codex 5.4 — bigger UI with a white-on-white bug
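
If the white-on-white diagnosis is right, this is the kind of bug a simple contrast check would flag. Below is a minimal sketch of the WCAG 2.x contrast-ratio formula. The function names are hypothetical, it only accepts `#rrggbb` colors, and it was not part of the experiment.

```typescript
// Converts one 8-bit sRGB channel to linear light, per the WCAG 2.x
// relative-luminance definition.
function linearize(channel: number): number {
  const c = channel / 255;
  return c <= 0.03928 ? c / 12.92 : Math.pow((c + 0.055) / 1.055, 2.4);
}

// Relative luminance of a "#rrggbb" color: 0 for black, 1 for white.
function luminance(hex: string): number {
  const r = parseInt(hex.slice(1, 3), 16);
  const g = parseInt(hex.slice(3, 5), 16);
  const b = parseInt(hex.slice(5, 7), 16);
  return 0.2126 * linearize(r) + 0.7152 * linearize(g) + 0.0722 * linearize(b);
}

// Contrast ratio between two colors, from 1 (identical) up to about 21
// (black on white). Argument order does not matter.
function contrastRatio(foreground: string, background: string): number {
  const a = luminance(foreground);
  const b = luminance(background);
  const lighter = Math.max(a, b);
  const darker = Math.min(a, b);
  return (lighter + 0.05) / (darker + 0.05);
}
```

Identical colors give a ratio of exactly 1, far below the 4.5:1 minimum that WCAG AA sets for normal-size text, so a check like this over the theme's text and background pairs would catch the problem before it ships.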

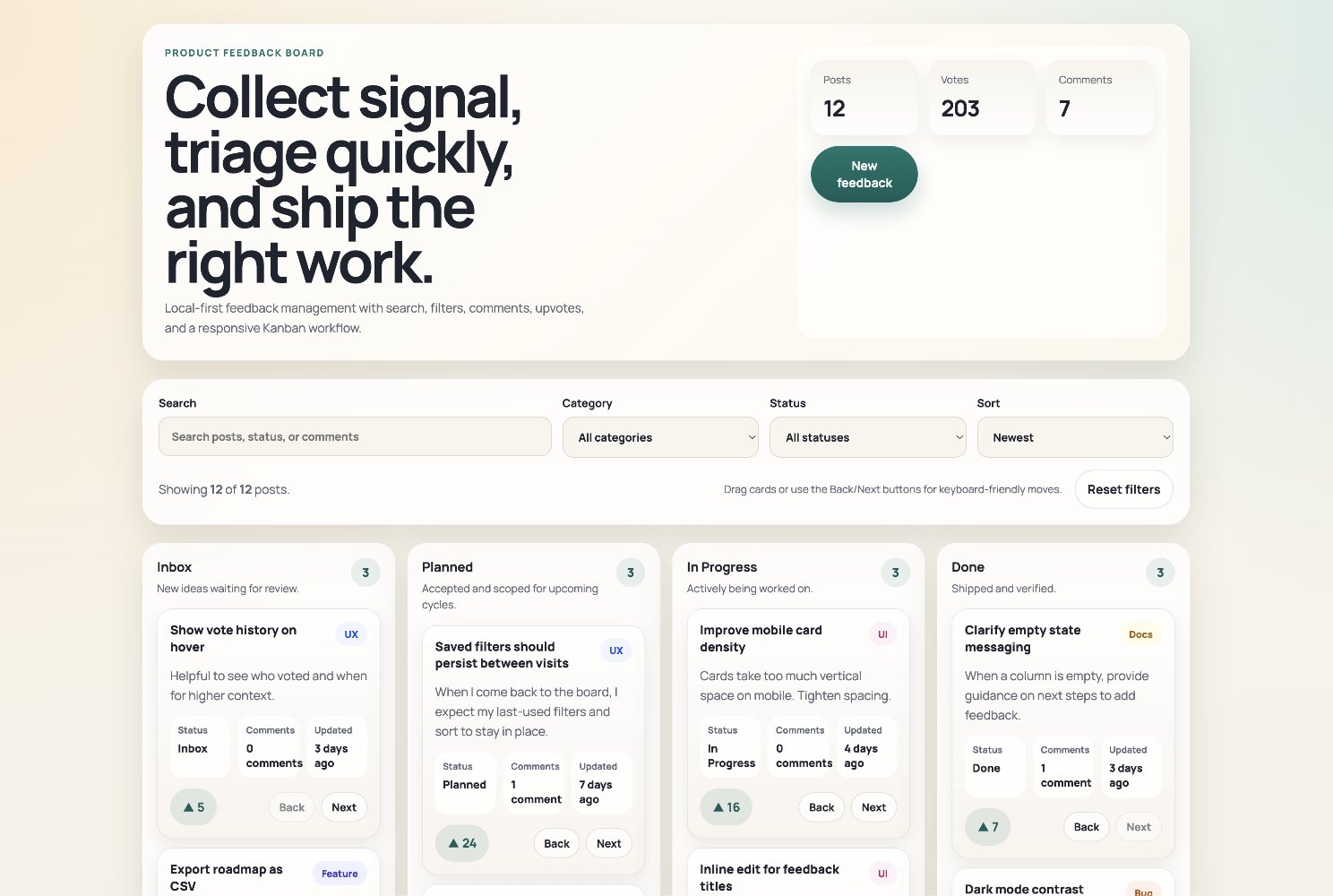

Codex 5.2

Funnily enough, it used a very similar style and color scheme to Codex 5.4, just in a more naive and simplified way.

Codex 5.2 — similar style, more simplified

What Stood Out

A few patterns became clear.

- Planning: Opus produced the more detailed and structured plan

- Control: Opus stayed much closer to a human-in-the-loop workflow

- Autonomy: the Codex models interrupted far less (one confirmation or none, versus 10+ for Opus), but that did not make them meaningfully faster

- UI quality: Opus still felt more polished

- Reliability: Opus and Codex 5.4 ran their projects on the first try, while Codex 5.2 failed on its first two attempts

Conclusion

For now, I would still choose Claude Opus 4.6 for this kind of coding work.

Not because it destroyed Codex 5.4.

It didn't.

But because the combination of:

- stronger UI taste

- more explicit planning

- better-controlled execution

still makes it feel more dependable.

Codex 5.4 is clearly strong.

Very strong, actually.

But it still felt a bit too confident in its own choices.

That's great when you want autonomy.

Less great when you want predictability.